Thread replies: 95

Thread images: 63

File: 007_Chapter_0.jpg (1MB, 1200x1718px)

Hello /h/! I usually never post in here, but a few years ago I read a hentai manga that I really liked, and I never saved it or remembered its name. It was about a boy who lived in an apartment; his neighbor was a girl, and there was a small peephole between their rooms. The girl caught him looking through the peephole one time and called him out on it, but she said her only condition was that he keep the hole and that she could spy on him. I don't remember much more, but in return I will dump something!

much appreciated

>>

OP here, how come it's not uploading my files?

>>

>>4092416

nozoki ana

it's not really hentai though

>>

never mind, i got it

>>

OP What's the name of the hentai you used as image?

>>

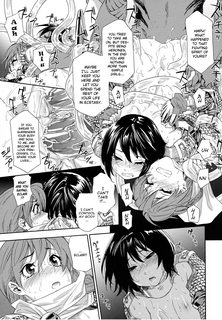

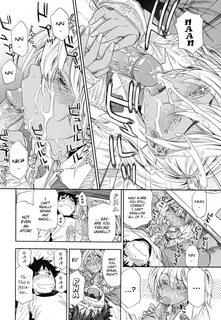

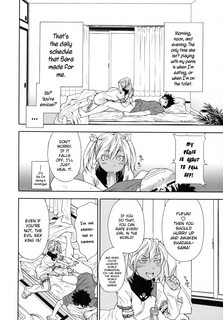

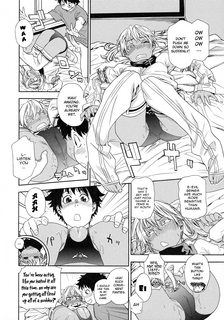

sorry. I am dumping [Yamatogawa] Power Play!

>>

>>4092424

Power Play

>>

and thank you for the helpful info, anon!

>>

dang i didn't realize dumping was so slow lol

>>

File: 031_Chapter_1.jpg (729KB, 1200x1719px)

>>

File: 040_041.jpg (2MB, 2419x1730px)

>>

File: 045.jpg (1MB, 1200x1728px)

>>

File: 049_Chapter_2.jpg (847KB, 1200x1722px)

>>

File: 053.jpg (727KB, 1200x1722px)

>>

anyone here? if not, i will take a break after this chapter and resume later

>>

File: 060.jpg (893KB, 1200x1721px)

>>

File: 067.jpg (936KB, 1200x1727px)

>>

>>4092510

Generally, there are always lurkers. Most just don't comment.

>>

gotcha. either way, i've been posting for close to an hour, so i will take a break

>>

>>4092537

/h/ is a traditionally slow board. most people that come here don't realize that threads here last weeks and responses may be days apart. People are always looking at your stuff.

When i dump i don't just focus on getting those images out. just put up the next one when you think about it.

>>

>>4092416

i know what you're talking about op. sadly i can't remember the name, but the good news is that it already got animated. i have it somewhere in my downloads, so i may be able to give you the name, just let me check

>>

power play by yamatogawa

>>

[140328][Pink Pineapple] Fella Pure ~Mitarai-san Chi no Jijou~ THE ANIMATION "Asa kara Ban made Shabutte Ageru"

not so sure about it but i think i found it op

>>

>>4092537

Been a long time since I've seen Power Play. Good on you, dumper.

>>

>>4092989

op is talking about nozoki ana, everyone knows what it is

>>

Pretty cool. Much appreciated. :)

>>

>>4092427

I fucking love Power Play.

>>

Thanks for reminding me of the second most feel hentai I ever read.

>>

>>4092416

Ana Satsujin

>>

Could I get a link to this in english please? I can't find anything in english. In return I will post something when I come back in around 30-45 minutes.

>>

>>4095531

http://g.e-hentai.org/g/697532/9a147c4035/

>>

>>4095573

Ty. Now as promised, I will post something although I don't know what yet.

>>

>>4095626

Whoops. Just remembered I deleted most of my hentai folder. Do you guys mind taking something from /d/ or /aco/ as my contribution?

>>

looking for a weird hentai manga. i've forgotten almost everything about it since it's been like 5 or so years.

but i do remember it had weird fat orc monsters that kept a bunch of women in cages and fed them like pigs. there was a pregnant one, and maybe one of them was a princess or a knight?

i don't normally jerk off to hentai so i have nothing to post, sorry. i just remembered this for some reason and wanted to find it

>>

>>4094055

You've read something that gave you more feels than that? No way. I had to stop halfway through and come back because my chest was hurting.

>>

>>4095965

My biggest feel hentai was "May Not Miss Pervert Fall in Love?" Yeah, I have poor taste.

>>

>>4095965

Believe Machine and stories by Makio gave me more feels.

>>

>>4095573

because fuck logins, and fuck applications, wrote a dlscript

no deps beyond standard tools: bash 4+ (for the readarray builtin), curl, wget, grep, cut, sort, uniq

assumes url is first gallery page, names files with just page number, and tosses everything into whatever directory you're running out of

also "parallelizes" the download by spawning 40 fucking wget subprocesses, because why the fuck not

```bash
#!/bin/bash

if [[ $# -ne 1 ]]; then echo "Need g.e-hentai url" && exit 1; fi

declare -a gallery
declare -a imageURLs

# < <(stuff) is because if I had just piped readarray, the pipe would run it in a subshell. This is a workaround
# gets all the gallery pages
readarray gallery < <(curl $1 2>&1 | grep -o -E 'href="([^"#]+)"' | cut -d'"' -f2 | grep /g/ | grep -v -E .*\.xml | sort | uniq)

echo "fetched pages:"
for page in "${gallery[@]"; do echo "$page"; done

for page in "${gallery[@]}"; do
  echo "reading url: $page"
  # gets all the pages from each gallery
  readarray imageURLs < <(curl $page 2>&1 | grep -o -E 'href="([^"#]+)"' | cut -d'"' -f2 | grep /s/)
  for URL in "${imageURLs[@]}"; do
    # gets the page number from the URL, to use as the name of image
    num=$(echo "$URL" | grep -o -E "[0-9]{0,}$")
    # gets the image URL from the page, and downloads it
    wget $(curl $URL 2>&1 | grep -o -E 'src="([^"#]+)"' | cut -d '"' -f2 | grep -o -E "http://[0-9]{2}.*") -O $num -q &
  done
  wait
done

echo "done"
```
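The `< <(stuff)` comment in the script is about where `readarray` runs. A small sketch (bash 4+) contrasting a pipe with process substitution, plus what `readarray` does with newlines when `-t` is left off, as it is in the script. The function names are made up for the demo.

```bash
#!/usr/bin/env bash
# Why `readarray arr < <(cmd)` works and `cmd | readarray arr` does not.
# readarray (a.k.a. mapfile) needs bash 4.0 or newer.

# Each command in a multi-command pipeline runs in its own subshell
# (unless `shopt -s lastpipe` is on), so readarray fills a copy of the
# array that disappears when the subshell exits.
count_with_pipe() {
  local -a lines=()
  printf 'a\nb\nc\n' | readarray -t lines
  echo "${#lines[@]}"
}

# With process substitution, readarray runs in the current shell and
# reads from a file descriptor hooked up to printf, so the array survives.
count_with_procsub() {
  local -a lines=()
  readarray -t lines < <(printf 'a\nb\nc\n')
  echo "${#lines[@]}"
}

# Without -t, every element keeps its trailing newline ("x\n" is 2 chars).
first_len_without_t() {
  local -a lines=()
  readarray lines < <(printf 'x\n')
  echo "${#lines[0]}"
}

echo "pipe:       $(count_with_pipe)"       # pipe:       0
echo "procsub:    $(count_with_procsub)"    # procsub:    3
echo "len w/o -t: $(first_len_without_t)"   # len w/o -t: 2
```

The missing `-t` is mostly harmless in the script because the unquoted `curl $page` strips the newline through word splitting, but it is why `echo "$page"` prints an extra blank line per entry.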

>>

>>4097403

>when /g/ goes mad

>>

>>4097403

Saved for testing later

10/10 if it works

>>

>>4097403

>for page in "${gallery[@]"; do echo "$page"; done

fuck me, I added that line just for output noise, without actually checking it

should be

>for page in "${gallery[@]}"; do echo "$page"; done

(forgot the closing brace)

otherwise, tested only on powerplay

>>

>>4097665

ran it on Vanilla Essence, and it turns out g-e likes to link over to alternative copies of the manga

so fuck them, it's fixed

```bash
#!/bin/bash
# Needs bash 4+ (readarray), curl and wget.

if [[ $# -ne 1 ]]; then echo "Need g.e-hentai url" >&2; exit 1; fi

declare -a gallery
declare -a imageURLs

# < <(stuff) is because if I had just piped readarray, the pipe would run it in a subshell. This is a workaround
# gets all the gallery pages
# for some reason g-e likes to link to alternative copies of the manga as well, so galID and the final awk filter just check that we're only looking at the copy specified.
galID=$(echo "$1" | awk -F '/' '{print $5}')

# -t strips the trailing newline from each element; curl -sS hides the progress meter but still reports errors
readarray -t gallery < <(curl -sS "$1" | grep -o -E 'href="([^"#]+)"' | cut -d'"' -f2 | grep /g/ | grep -v -E '\.xml' | sort -u | awk -F '/' -v id="$galID" '$5 == id')

echo "fetched pages:"
for page in "${gallery[@]}"; do echo "$page"; done

for page in "${gallery[@]}"; do
  echo "reading url: $page"
  # gets all the pages from each gallery
  readarray -t imageURLs < <(curl -sS "$page" | grep -o -E 'href="([^"#]+)"' | cut -d'"' -f2 | grep /s/)
  for URL in "${imageURLs[@]}"; do
    # gets the page number from the URL, to use as the name of image
    num=$(echo "$URL" | grep -o -E '[0-9]+$')
    # gets the image URL from the page, and downloads it in the background.
    # "( ... ) &" keeps the job a child of this shell, so "wait" below actually waits for it
    (
      img=$(curl -sS "$URL" | grep -o -E 'src="([^"#]+)"' | cut -d'"' -f2 | grep -o -E 'http://[0-9]{2}.*' | head -n 1)
      wget -q -O "$num" "$img" || echo "failed: $URL" >&2
    ) &
  done
  wait
done

echo "done"
```
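A background job started as `( cmd & )` is not the same as one started as `( cmd ) &`, and the difference decides whether `wait` and an `|| echo` fallback do anything. A small sketch of both, using a hypothetical `fake_download` in place of the real curl + wget step so it runs offline:

```bash
#!/usr/bin/env bash
# "( cmd & )" vs "( cmd ) &" and what `wait` can see.
# fake_download is a hypothetical stand-in for one curl + wget download.

fake_download() {
  sleep 1            # pretend the transfer takes a moment
  echo ok > "$1"     # then write the "downloaded" file
}

# "( cmd & )": the job is backgrounded *inside* a subshell. That subshell
# exits at once with status 0, so the job is not a child of this shell:
# `wait` returns immediately and the `|| echo` branch can never run.
detached_then_wait() {
  (fake_download "$1" &) || echo "never printed"
  wait
  if [[ -f $1 ]]; then echo "finished"; else echo "not finished"; fi
}

# "( cmd ) &": the subshell itself is the background job, so `wait`
# blocks until it exits; failure reporting has to live inside it.
child_then_wait() {
  (fake_download "$1" || echo "failed: $1" >&2) &
  wait
  if [[ -f $1 ]]; then echo "finished"; else echo "not finished"; fi
}

dir=$(mktemp -d)
echo "detached: $(detached_then_wait "$dir/a")"   # detached: not finished
echo "child:    $(child_then_wait "$dir/b")"      # child:    finished
```

In the detached case the download still happens eventually; the script just loses track of it, so "done" can be printed while files are still being written.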

>>

>>4097689

What exactly should I do with this to download something?

I'm more or less a noob, so in English please.

>>

>>4097737

If you need to ask, it would take too long to explain.

Go visit /g/ and don't come back until you have become a true believer in gentoo.

>>

>>4097737

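you need to be on a *nix system (mac or linux distro). on a mac, the built-in /bin/bash is 3.2, which has no readarray (that needs bash 4+), and wget isn't installed by default, so install both first (e.g. with Homebrew) and run the script as "bash name.sh <url>" with the newer bash on your PATH

copy and paste the whole script, including the #!/bin/bash line, into a text file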

name it whatever you want, with a .sh extension

run "chmod +x name.sh" (to give execution permission to the file)

then run "./name.sh http://g.e-hentai.org/g/865306/11c6e80985/", and it'll download every image to whatever folder you're currently in

replacing the URL with whatever gallery you want, obviously

to be clear, this would all be in the terminal
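Before running it, a quick pre-flight check can save a confusing failure halfway through. A minimal sketch, assuming the requirements are bash 4+ at /bin/bash (the script's shebang) plus curl, wget and the usual text tools; `check_prereqs` is a hypothetical helper, not part of the script.

```bash
#!/usr/bin/env bash
# Hypothetical pre-flight check for the download script (not part of it).
# Assumption: the script needs /bin/bash 4+ (readarray) and these tools on PATH.
check_prereqs() {
  local status=0 tool
  local -a tools=("$@")
  # Default tool list when none is given.
  if (( ${#tools[@]} == 0 )); then
    tools=(curl wget grep cut sort uniq awk)
  fi
  # The script's shebang is #!/bin/bash, so check that interpreter's version.
  if ! /bin/bash -c '(( BASH_VERSINFO[0] >= 4 ))' 2>/dev/null; then
    echo "/bin/bash is missing or older than 4.0 (readarray needs 4+)" >&2
    status=1
  fi
  for tool in "${tools[@]}"; do
    if ! command -v "$tool" >/dev/null 2>&1; then
      echo "missing: $tool" >&2
      status=1
    fi
  done
  return "$status"
}
```

From a bash prompt, something like `source check.sh && check_prereqs && ./name.sh <gallery url>` only starts the download when everything is in place.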

>>

>>4097872

and if you want to verify the evility of my work, you can plug shit into http://explainshell.com

but basically, curl gives the html output, and wget downloads files

and every other thing happening is just parsing the curl output (html) for the links I want

and then it gives the final image links to wget to download
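The parsing half can be tried on its own without touching the site. A sketch with made-up sample HTML and hypothetical helper names (`extract_links`, `gallery_id`, `page_number`, `gallery_pages`); the grep/cut pipeline and the field-5 gallery ID check mirror the script, except that the ID is passed to awk with `-v` rather than spliced into the awk program text.

```bash
#!/usr/bin/env bash
# Offline demo of the script's link parsing. Sample HTML and URLs are made up.

# Print every href target on stdin that contains the substring $1 (e.g. /g/ or /s/).
extract_links() {
  grep -o -E 'href="([^"#]+)"' | cut -d'"' -f2 | grep -F -- "$1"
}

# Split on "/": http: | (empty) | host | g | <gallery id> | <token> ...
gallery_id() {
  echo "$1" | awk -F/ '{print $5}'
}

# Trailing digits of an image-page URL, used as the output filename.
page_number() {
  echo "$1" | grep -o -E '[0-9]+$'
}

# Gallery pages on stdin that belong to gallery $1 (drops links to other copies).
gallery_pages() {
  local id
  id=$(gallery_id "$1")
  extract_links /g/ | awk -F/ -v id="$id" '$5 == id' | sort -u
}

sample_html='<a href="http://g.e-hentai.org/g/697532/9a147c4035/?p=1">2</a>
<a href="http://g.e-hentai.org/g/111111/0123456789/">another copy</a>
<a href="http://g.e-hentai.org/s/abc123def4/697532-7">page 7</a>
<a href="#top">top</a>'

gallery_pages "http://g.e-hentai.org/g/697532/9a147c4035/" <<< "$sample_html"
# http://g.e-hentai.org/g/697532/9a147c4035/?p=1
extract_links /s/ <<< "$sample_html"
# http://g.e-hentai.org/s/abc123def4/697532-7
page_number "http://g.e-hentai.org/s/abc123def4/697532-7"
# 7
```

The `#top` link never matches because `[^"#]+` rejects a target starting with `#`, and the "another copy" link is dropped by the gallery ID check.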

>>

man i really enjoyed it!!

Title: Power play!