Thread replies: 124

Thread images: 20

File: 1445601354587.jpg (98KB, 430x350px)

Now, we are all familiar with the paradox of Theseus' Ship, or Grandfather's axe as it is known to some: the dilemma of when the original ceases to be original as its parts are replaced.

Now with modern medicine and science, we can replace most human parts. When do you cease to be the original you and become a new you? The brain seems to be the final frontier in this case, as we have no idea how it works, how to replace it, or whether it is even replaceable. Is our mind the product of our brain, or is some ether from the outerworlds?

I know SJWs and nu-newwave feminists get a lot of shit in here (and most of their arguments and ideas are plain silly). But they do raise a bigger question about what it means to be me, or even human.

Will this be the century in which we see the first non-human humans? The shallow and flawed mind of the human, living in a body perfected by machines?

>>

Jesus christ you are retarded.

The paradox of Theseus' Ship cannot be applied to any living, self-regulating organism. The human body knows when a part of it does not belong. It has means of accessing this and it spits out shit that shouldn't be there.

>>

Congrats we don't really exist in any essential, discrete way and the ground of being is nothingness, you're officially a buddhist

>>

The one that really fucks with my mind:

It's the future. We have the technology to completely create people artificially, from scratch. A clone is made of me, perfectly cloned in the physical sense. Then the entire contents of my brain is copied to a computer, and inserted into the clone. What happens to my frame of reference? Will "I" experience the world from two bodies now, myself and the clone? Who is truly "me"? Both the original me, and the clone? The clone is so perfect that not even my family can tell the difference. Am I not then the clone? Can I see the world through his eyes? Fuck.

>>

>>590387

No, you will die and cease to exist. The clone of you will be recognizable to everyone as you but you yourself will not be conscious of this, because you will be dead. You do not experience what the clone experiences.

>>

Humans have souls. That can never be replaced

>>

File: 1446484353155.png (26KB, 1187x846px)

>>590582

>Humans have souls.

>>

>>590335

>The human body knows when a part of it does not belong. It has means of accessing this and it spits out shit that shouldn't be there.

Except the human body is constantly replacing cells, and none of the skin you have today is the same skin you had when you were born, so it's perfectly equatable to Theseus' Ship

>>

>>590317

This is essentially a non-problem though OP, because if you actually knew more about philosophical problems you would know about the concept of a philosophical zombie, i.e. a human body that acts and seems like a complete human to the observer, but in reality has no individual thoughts or experiences of its own; it just "is" in the same way an animal is.

Also:

>Is our mind the product of our brain, or is some ether from the outerworlds?

Is ridiculous, because of course the mind is the product of the brain.

What do you think happens to Alzheimer's patients? That the reason they lose the ability to recognize tools is because their soul is leaving the body?

>>

>>590615

Bullshit

Look up basal membranes

We have a bunch of cells that rise up and replace the dead cells and those that abrade off

That's why old people have that stretchy skin and why corrective eye surgery is shit - if you live long enough removing those corneal cells will mean your cornea ends up too thin to function and clouds up

All this crap came from physicists who don't understand biology

>>

>>590317

continuity of identity

>>

>>590317

There are two answers to the ship of Theseus and both are correct.

Heraclitus explains it by telling us the universe is in a state of flux; there is no fixity. If you can't step in the same river twice, you can't be the same 'person' you were a moment ago. Thus transitioning from being more human to being more machine is just one of the infinite manifestations of the flux.

Aristotle emphasizes functionality, saying that the ship that was designed to carry the heroes across the sea was one item. The ship that was designed to sit in a museum was another item, and whether that ship is altered or not does not change its status because its function is still the same. In other words, if I replace my weak human legs with cyborg legs, the function of the thing has not changed; I have simply been upgraded. However, if I replace them with machine guns, then the function has changed and I have become a new item entirely.

>>

>>590317

I prefer to think of it as Trigger's Broom.

>>

>>590317

>>590706

To add to the dilemma, what if the old, replaced parts were reassembled to be exactly as they were before the initial replacement took place?

Both the new and improved ship of Theseus and the reconstructed old ship of Theseus would be functioning properly.

A Buddhist answer is that both can claim the identity of "ship of Theseus", because they are both correct. Ultimately, however, neither of the ships has any "essence" to its existence. This could apply to humans or anything in general. The false essence of what we know as the ship can usually be boiled down to a combination of specific parts of the thing. In this case, the function of the ship, the timing, and the name. In humans, it would be the memory, senses, body, etc.

Ultimately the nature of identity is not a solid construction; you can change the base structures, the name, and even the function, and it would in general remain the "same" ship.

Suppose the ship of Theseus had slowly upgraded itself and survived to today with hundreds or thousands of upgrades throughout its "existence". It would still technically be the ship of Theseus, if the general continuum was there. The new ship of Theseus may have a nuclear engine and a titanium alloy hull and might be in space, but it would still be the "same" ship, if nothing else complicates the matter.

>>

>>590532

This, although if the clone's brain was a perfect copy, he would think that he was you and that your consciousness did transfer, and he would be you in literally every way except he wouldn't have the same consciousness/soul or however you want to phrase it.

>>

>>590387

Who says you can copy brains to computers? They're not really the same, only superficially.

>>

>>591790

It doesn't matter if computers aren't 1:1 the same as a brain. As long as the functionality is the same, it will happen.

You can't say an airplane can't fly because it doesn't have a bird wing. It doesn't flap but the concept is still relatively the same.

>>

>>591798

A bird's flight is very different from a plane's flight. For instance, its ability to reverse, to make turns and adjustments.

With something as dramatic as a brain there would be even more discrepancies, enough to basically make a computer unable to fulfill the functions of the brain.

Alex Kirkeegaard pretty well summarizes why computers will not be able to duplicate brains (at least not without a non-binary programming language). Computers are based on absolute and fixed values such as floats and bools, while the human brain is based on more flexible and relative values. Truth is not Boolean in the human perspective. We had several decades of philosophers trying to treat truth as Boolean. Wittgenstein ultimately destroyed that idea by first creating the idea of atomic facts (a necessary concept for Boolean truth) and then later showing why the concept is shitty.

>>

>>591931

Neurons fire or they don't. There is no in between.

Also both airplanes and birds fly due to understanding/adaptation of fluid mechanics

>>

>>590317

>>590615

Neither the body nor the brain is static. Nevertheless, your consciousness remains. It does not disappear and get replaced by someone else's. Thus, I think the question you need to look at is 'what determines consciousness?' This creates some important follow-on questions. Why does my consciousness reside in my brain and not someone else's? Why did I happen to be a person and not an animal or an inanimate object? Is consciousness encoded by brain structure and, if so, how does that fit in with the fact that brain structure is constantly changing? Is it encoded by a more general pattern of the brain's structure? Can computers become conscious? I don't personally believe in a soul defined as 'an immortal consciousness' - before you were conceived you were not conscious; when you are knocked out, you're unconscious. A soul doesn't jump out and continue consciousness. That said, I struggle to believe that if someone created a brain that mimicked the structure of yours it would somehow contain your consciousness. For one, you have the difficulty of defining the structure of someone's brain when it constantly changes. Secondly, wouldn't identical twins be as good an example of this experiment as anything else?

Some more thought experiments to think about:

>When you are teleported, all the cells in your body are destroyed then remade. Would the remade you have your consciousness, or would yours have been destroyed?

>If half of two people's brains are stitched together, what happens to the consciousness? Is a new composite one created?

>>

>>590317

>When do you cease to be the original you and become a new you?

You don't, as long as your memory remains. Human minds are a series of psychological states linked over time by memory. If there exists a future being that has your memories and experiences, it's you. Whether that being is organic or machine.

Now, the real question is, what happens if instead of being replaced with robot parts, you instead create a robot duplicate? They can't both be you, because they are not the same. Well, here's my theory on that: Neither are "you", in the sense that you are not "you" five seconds ago, and you are not "you" five years ago. But you are still psychologically continuous with those other, older, "you"s, so you can still be called "you". The same would go for the duplicate, it can also be called "you", even though it doesn't have exactly the same experiences, because there was once a moment in time where you did (when it was created).

>>

>>594019

Welcome to Buddhism

>>

File: a revolutionary thinker.jpg (116KB, 1279x727px)

So hold up,

You guys are telling

What

You're saying...

I've changed. Alot.

>>

>>594834

Stop answering everything with Buddhism.

>>592346

Yeah, maybe I (OP) was a bit unclear. But I meant that what makes me "ME".

This is something that has been debated for a long-ass time. But I feel like we are actually scarily close to the situation where we can replace most functions of a human, leaving behind the old and going forth with the new. Most of this comes from improved medicine, our understanding of DNA/genes, and our rapid advances in the nanosciences.

I mean, cloning a sheep was a huge moral dilemma for some of us. Surely the dilemma of what constitutes you will be an even greater problem in the future, not purely from a philosophical perspective but also because of the legal implications it has.

>>

>>595660

>what makes me "ME".

The fact that you can say that you are you. Only you have your experiences. No one else can experience your perspective.

And that's it. There's really nothing else that makes you who you are. It's just your first-person perspective and the experiences you have through it, chained together by your memories.

This means two things: If you were replaced bit by bit with robot parts, yeah, you'd still be you.

Two: if you were cloned, no, your clone is not you, because they don't have your experiences. They can't see themselves stepping out of the clone vat from your perspective. They don't remember ordering the cloning machine and paying for it to be delivered, and thinking about how neither of you will be virgins anymore. No, that clone has its own thoughts and perspective: it can't be you.

>>

>>590387

I suppose your question would be answered by how the mind was transferred.

You could either transfer the supposed soul/ethereal mind into another's brain and then you'd be viewing yourself from two people I guess, assuming a soul can be copied to another brain or "wired" to connect to two brains.

And then there's the identity theory side that argues the brain/mind are the same thing and therefore you would be you and the clone would just be a copy.

>>

>>590387

I read a book on this, I think it was called Mindscan, where people who were dying could transfer their mind into a robot clone. The original would then be sent to some retirement home to await death on the moon or something, while the clone continued on Earth to live pretty much forever. One of the major plot points of the book is that the family of one of the people who did this procedure gets pissed off because they don't get their inheritance, and so they take the clone to court. They argue that since the original has now died on the moon, and the clone is not the original, they should get the money. The whole court case is this argument about whether or not the clone is the individual and deserves to keep the money.

>>

>>595840

So if I'm getting this right, he's saying that the mind can't simply be a string of experiences because it can assess the fact that it is such a thing, and thus there must be some higher state of consciousness that recognizes experiences and analyzes them, etc.?

And so this higher consciousness state, "transcendental apperception", is the only thing that defines us as a "self" because it allows us to recognize that our experiences are ours and not just a series of disorderly events, and acts as a constant factor that ties everything together?

>>

>>595763

but what if you cloned your consciousness and memories as well so that the clone has access to your experiences and has memories of ordering the cloning machine and not being virgins anymore? You would both have the same starting point of reference in regards to experience.

>>

>>595888

But you would not have the same experiences after that point. You're still two different beings with different experiences. You'd be seeing each other face to face, shaking each other's hands, going your separate ways, maybe one tries to kill the other. You have your own separate minds and thoughts. From the second that clone exists it's making new memories you never had, and you're making new memories it never had.

Now, I suppose if you both SHARED a brain somehow and one mind was controlling the two bodies then yeah, you'd be the same person since there's only one mind. But that's not really as confusing an idea as clones or teletransportation.

>>

It's not about the ship or axe, per se. It's about the user. In Theseus' Ship, even if all his ship's parts are replaced he is still at the helm of the ship itself. He guides it and that's what makes it his ship. It doesn't need to have the original parts. Same thing with the axe; it's the wielder and his use, not the pieces of the axe itself.

What makes us intrinsically human is why we will never be "replaced". There's no such thing as a non-human human.

>>

>>595904

>There's no such thing as a non-human human.

I think the question is less whether there are non-human humans and more if there are non-human persons that are the same persons that were once human persons.

>>

>>595902

the original and the clone would be different after that point, yes, but would that mean that at that singular point in time they are the same? and if so how can you conclude which is the original? why are the new memories of one version accepted as "me" and not the memories of the other? basically which version is "me" and why?

>>

>>595904

would you say then that since humans are an end in themselves that they are not subject to a certain instrumental function as the axe or ship is?

>>

>>595926

Honestly, I'm going to go with the Parfit view here and say it doesn't matter if they were the same at that single point. Personal identity is kind of inconsequential in the instance of a single indefinite moment. If you really had to know, by the time you'd be able to do anything in order to tell, or measure it somehow, it'd be over already.

As for who's the original, that's simple. The one who existed first. If you don't know that (maybe they both think they existed first or something) then consider that maybe NEITHER are the original and the original no longer exists.

My point here is it doesn't really matter if you're "you" or not as long as you tick all the right boxes. You have your memories... you have your experiences... you see things from your perspective. So you're you.

If you're asking how an observing third party could possibly know who's you and who isn't, the answer is they can't. I cannot see your mind or anyone else's so for all intents and purposes you could be anybody. You could be my best friend one day and a clone the next. But the question is, does it matter? I think not. That's just my opinion though.

>>

>>595886

Yes!

That's a basic way of phrasing it, and Kant makes that same point in several different ways, depending on what implications he wants to emphasize.

Technically speaking, your conscious mind *is* a string of experiences (really, it's one unified whole that we call "experience"). What your conscious mind can't be is a string of mere mental contents - mere sensations of shapes/colors/sounds/textures/pains/pleasures/desires, or mere images of places and events whether present or in memory - because such mere contents are not self sufficient for a coherent, synthesized consciousness; such contents would be an unintelligible jumble of raw data that couldn't even be known by a self thinking "I am conscious of an incoherent jumble of stuff." It wouldn't be experience of chaos - because true chaos wouldn't yield experience at all.

It would be, as Kant puts it, "less than a dream."

But from the fact that you *are* conscious of sense data that are ordered into connections (constituting objects), and of law-governed regularities between these objects (constituting events), you can infer that there is some automatic, spontaneous faculty of your mind that imposes such order on what you sense externally in space and internally in introspection. This ordering faculty allows you to understand things because this faculty *is* your understanding - one basic mental function of several whose cooperation yields your consciousness, in Kant's system.

>>

>>595840

>>595886

>>596040

The regularity of the understanding's synthesizing activity is just that "higher" (though we could equally use the analogy "deeper," as in more fundamental) consciousness, that ultimate unity of self, called the transcendental unity of apperception. It is this self that does the thinking when you're aware that "I am conscious of my self." The latter "self" in that judgement is your empirical self - the human body you know in outer sense, and the stream of mental states you know in inner sense - and the "I" is your transcendental self. And it is just this "I" that, in consequence, you can never investigate further - because, as the Upanishads ask, to which Schopenhauer would point:

"How can the knower be known?"

>>

The Buddhists take the view that, so long as the parts are replaced one at a time in a continuous span, the ship is still Theseus' ship. "We" are not so much an individual phenomenon as a series of phenomena moving through time, affecting other series of phenomena just as much as these other series affect us.

When you become the new "you" is every time "the future" becomes "now".

>>

The issue, I feel, is thinking of the concept of "you" or identity in terms of a static present and not as a constant becoming or presencing. At this moment Theseus' ship is like this, at this later moment it is like that. This says nothing about the continuous existence of the ship through time, about what holds these moments together. This seems to be echoed in Being and Time, in that the "essence" or being of an object is found in authentic temporality and not in a succession of now-moments in the "ontic world". You cannot describe the radically unique being of an entity by referencing another entity, so you cannot describe the radical uniqueness of the ship by referencing the materials it is made of at a certain moment. Similarly, you cannot describe the radically unique self in terms of appearance, occupation, location, interests, hobbies, and so on and so forth. It is the continuation throughout temporality that discloses the radical uniqueness to us.

>>

>>595936

I don't see how the concept of an end-in-itself, belonging much more to ethics, pertains much to the question of personal identity and whether a self can be said to use its body, which belongs much more to metaphysics.

>>

>>590615

That is not the OP. OP is saying that we can replace human parts. Like we can put a new liver after yours has gone to the shitter.

>>

>>596124

This.

Becoming is what reveals Being. And a Being is always Becoming. So until the transformation (existence) of that Being is completed, there are no true parameters in defining Being at this moment in time. All we can say is that it is constantly changing: for life is change. What is Being? Well I think it is the indwelling consciousness - the "soul" or "spirit"

>>596169

You're dumb.

>>

>>595840

>the "consciousness stems from the ability to self-reference" meme

>>

>>598057

What I meant was that if a human is an end in itself then it does not have a "user" nor a particular "use" in the same way that an axe has a use and a user.

>>

>>590387

Even if you can copy thoughts/brain whatever, you'll still be different from the other even a fraction of a second after the copy transfer has happened.

>>

>>598530

ok then offer an alternative, almighty memelord

>>

>>598530

I'm unfamiliar with this meme - could you elaborate on it?

>>

>>590721

>not Locke's Sock

>>

>>595840

But Kant was wrong about everything. I know because reality is necessarily possessed of a particular faculty wherein Kant is wrong. I shall name this faculty 'transcendental Kantian wrongness' and all particular arguments as to Kant's having been right about something will fail at the starting-gate, owing to their being contrary to this transcendental aspect of reality.

>>

>>600026

Okay - can you describe the representations of this faculty, and the forms that govern it, in a way that is equal to or stronger than the way that Kant describes and differentiates the representations of sensibility, understanding, reason, and judgment? Because without providing such an account, this faculty won't be an adequate competitor against the ones that Kant argues for, and doesn't merely name.

>>

>>590387

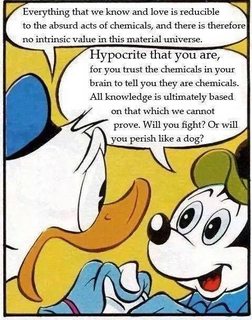

The text along with the kids' cartoon just makes me laugh at the absurdity

>>

>>600151

There is an additional fundamental faculty of reality, whereby all people disposed to question Transcendental Kantian Wrongness are themselves wrong. This I refer to as 'transcendental Kantian apologist wrongness'.

>>

>>600163

I see what you're trying to do, and it's clever, but my point is that Kant does much, much more than claim "reality just presupposes the features that philosophers have struggled to secure for it," wiping his hands and calling it a day.

Check out the Metaphysical and Transcendental Expositions of the Transcendental Aesthetic of the Critique of Pure Reason, and the Analytic of Principles of the Transcendental Analytic of the same work, for a basic example of the kind of description and argumentation you'd need to provide in order to surpass, or even match, Kant's depth and rigor.

>>

File: crude drawing.png (31KB, 660x529px)

>>590387

you probably shouldn't worry about it OP, here's why.

>Then the entire contents of my brain is copied to a computer

You're confusing yourself with inadequate comparisons. The hardware/software metaphor makes this very sticky and I think affects your inadvertently dualist frame of reference.

The contents of your brain _are_ your brain; to effectively "copy" it, your brain would have to be reproduced contiguously, effectively a whole-body transplant into something else. The contents aren't "files" that can be expressed digitally; the human brain functions with a constant interplay of chemical signals.

Think of it as putting on a new pair of pants. The first pair is the "organic" you represented by a pair of khakis, while the new you in a different body is represented by a pair of jeans. If you put one leg in at a time, you're never fully not wearing pants, and you never stop being clothed. When you have both legs in the jeans, however, the khakis stop being yours. Such I imagine it will be with transplantation of consciousness.

>>

File: Nábrókarstafur.jpg (48KB, 500x667px)

>>600197

Take the Icelandic black-magic method of Nábrókarstafur, or necropants.

>After he has been buried you must dig up his body and flay the skin of the corpse in one piece from the waist down. As soon as you step into the pants they will stick to your own skin. A coin must be stolen from a poor widow and placed in the scrotum along with the magical sign, nábrókarstafur, written on a piece of paper.

>Consequently the coin will draw money into the scrotum so it will never be empty, as long as the original coin is not removed. To ensure salvation the owner has to convince someone else to overtake the pants and step into each leg as soon as he gets out of it. The necropants will thus keep the money-gathering nature for generations.

The pants are never fully empty, and that's how the spell remains uninterrupted.

>>

>>600194

Yeah I'm obviously not serious, but I do have serious issues with transcendental arguments as a class.

>>

>>590387

>Will "I" experience the world from two bodies now, myself and the clone?

No, you are still just your own body. The clone is a separate person.

>Who is truly "me"? Both the original me, and the clone? The clone is so perfect that not even my family can tell the difference.

There are many things in this world that are indistinguishable. Consider leaves or blades of grass. If I burn one leaf, I don't burn all leaves.

>Can I see the world through his eyes?

Do you have some mechanism to transmit the clone's visual senses into your brain?

>>

>>600232

The clone has my brain. It's a carbon copy.

>>

>>600235

So it's an identical twin? Twins don't share the same self-awareness even though they start out with the same genetic material.

>>

>>600232

>There are many things in this world that are indistinguishable. Consider leaves or blades of grass.

These are only indistinguishable to a given degree of granularity in observation, though. A powerful enough microscope will reveal the differences. What he's getting at is the notion of being indistinguishable *in principle* rather than in practice. Here you should go to identical twins or something, not blades of grass.

>Do you have some mechanism to transmit the clone's visual senses into your brain?

See, you're begging the question of monism here. I'm not a dualist, but you can't just make the assumption if the other person isn't a monist.

>>

>>600235

The clone occupies a separate volume in space, I assume. Thus we have at least some way to distinguish them. You personally do, at the very least.

>>

>>600256

The person who is truly "you" is the one with the brain you have now. To complete the brain-swap you and the clone will have one leg in both pairs of "pants" (your body and the new body built from scratch), and given a connection to the sense organs of those bodies will experience both of them at the same time.

>>

>>600255

I am simply trying to answer the question from a practical standpoint, he asked if he can "see the world through the eyes" of the clone, I think that's obviously silly. The clone is effectively a separate person with his own senses.

Unless there is some other more poetic interpretation, I don't know.

>>

>>600235

And the millisecond it becomes aware of its surroundings, it is experiencing a different point of view than you are, and very quickly becomes a different person.

>>

>>600214

Fucking necropants. What the hell, Iceland.

>>

File: cruder drawing.png (14KB, 422x523px)

>>600269

Like this. Given, however, that this would probably be a very complex, painful, expensive surgery, I would hope both of you would be sedated and unable to see during the operation, let alone see.

>>

>>600307

*let alone spea

>>

>>600307

Arguably you have created a single hybrid Frankenstein person with redundant organs.

>>

>>600324

And by putting the right leg in the right trousers you separate the redundant functions, creating two separate people. What's really funny about this is that you could possibly be speaking through another person's mouth, while seeing with your eyes, or vice-versa.

>>

>>592346

As an identical twin, I can safely say you make no sense

>>

>>600271

>I think that's obviously silly.

I also think it's unlikely to be the case. But I'm not going to present "I think that's unlikely" as an argument, because it's a shit argument.

He, or at any rate a suitably competent opponent, might speculate that the consciousness experiencing reality through our sensorium is actually immaterial. He might speculate that the procedure he's outlined might lead to the same immaterial consciousness experiencing reality through two distinct sensoria simultaneously.

It doesn't really matter that we think that's silly. What matters is how we can argue against it.

>>

>>600452

it's not gainful to speculate TOO much on the machinations of the brain when medical knowledge is still so limited, it's tantamount to trying to understand the visual processing ability of the eye without being able to view tissue on the cellular level

from what is known, I do think it's more reasonable to intuit that the consciousness or mind is a process of the brain that's dependent on the qualities of its structure. like another anon said, do people with Alzheimer's lose pieces of their immaterial being? or should it be judged as a mechanical failure that breaks down the illusion of a "conscious You" that sits in the brain like a little homunculus, rather than the sum of its parts?

>>

>>600501

>it's not gainful to speculate TOO much on the machinations of the brain when medical knowledge is still so limited

This is a fine stance, but it should lead you to not engage people who are doing it, rather than just proclaiming monism by fiat.

>do people with Alzheimer's lose pieces of their immaterial being?

Think of it as more like a TV signal getting interference, or the lens of a telescope accumulating dirt. It's even possible for the dualist to explain away major personality changes arising from brain traumas in this way, implausible and redolent of special pleading though it may be.

>>

>>600535

The TV signal thing is a great way to substantiate a dualist argument, but you still have to measure the TV signal and show where it comes from

>>

>>600556

The dualist might simply plead inability to offer material access to the immaterial. It is pretty weak shit, I'll grant.

>>

>>599893

Read the meme book GEB. Basically it says that since Gödel's sentence is self-referential, it must be the case that shit that we can't explain (like consciousness) is related to self-reference.

Also he throws in more memes like Escher's recursive drawings and Bach's fugues.

>>

>>600197

How do you take off your living organic pants? Do you fry your original brain? I get you can be both an organic and a mechanical entity, but what about after that?

>>

>>600580

the "pants" that you discard would conceivably be everything that isn't your visceral brain, and depending on the technology we don't have yet, everything that isn't your fore-brain and frontal lobes.

>>

>>600214

Daily reminder that northern europeans are barbarians and subhuman

>>

>>596124

Are you saying that an object is not only the sum of its parts, but the sum of its parts throughout every point in time of its existence?

>>

>>600648

the idea is that the being of an object cannot be explained by referencing its parts, but rather its being through time. the being of the ship cannot be explained by referencing its particular planks, or wheel, or masts but rather the ship's past, present, and future existence all working together at the same time. The radically unique "me" is not merely a sum of having brown hair, being a white heterosexual male, being a certain height or weight, my name, my interests, what I study, or where I live. These are not essential to my very being but are all accidental to my existence. Temporality, however, provides the horizon of understanding through which I can understand my radical uniqueness.

>>

>>600363

If consciousness is encoded by brain structure, you should be able to make another brain that has your consciousness by correctly mimicking your brain structure. Since identical twins have just about the same genotype, you would think their brains would be quite similar in structure, at least in a vacuum without external influence. Yet twins have separate consciousnesses.

>>

File: Picture 3.png (127KB, 361x432px)

>>600216

What issues do you identify? I wouldn't be surprised if I sympathize with them.

>>

File: ghost_in_the_shell.jpg (160KB, 1137x640px)

Ya'll muthah fuckas need to watch some Ghost in the Shell or something.

In any case, most folks make this much more difficult than it is by adding a "soul" to the equation. ...and it's hard enough without that, given that you also gotta contemplate non-existence, and how the hell your personal consciousness works.

>>

>>601283

>If consciousness is encoded by brain structure

it can be, but humans don't exist in a vacuum, and brain structure itself is influenced by environment and the storage of different memories, even on the molecular level by ambient radiation

for identical twins to share the same awareness they'd have to occupy the same physical space, otherwise they'd be aware of different things

>>

>>601456

Yes, but wouldn't those same problems exist in the experiment of transferring your neurons to a computer in an attempt to be immortal?

>>

Seems like the second law of thermodynamics will render computers fucked too, in the long long long long long term. Maybe there's some benefit to extending your consciousness a few millennia, but either today or tomorrow, you'll have to reconcile yourself with the inevitability of your annihilation.

Unless we can alter the very laws of physical existence, but that strikes me as slightly too optimistic.

>>

>>602449

>Seems like the second law of thermodynamics will render computers fucked too

And not our organic brains? What the hell are you even arguing mate, that somehow consciousness is above the laws of physics?

Fuck outta here with your high school understanding.

>>

>>602613

1. Take it easy. Breathe.

2. Think about where I remotely implied that our brains are immune from entropy.

3. Before you type a response, recall the word that you yourself quoted from my post:

> too

>>

File: cosmos.jpg (119KB, 900x505px)

This scenario is very presumptuous and is contingent on "you" existing at all. Identity doesn't actually exist. It's just a human concept. The keyboard I'm typing on is really just a collection of atoms, that are currently arranged so that they constitute what we refer to as plastic. Whether we call it a keyboard or a dildo makes little difference. It serves its purpose, and if we call it a keyboard we all know what we're talking about.

The only boundaries between you and the external universe are the ones you create. This is kind of what the buddhists were getting at when they said "everything is one". Not that the entire universe is actually one single thing. But that in the same way cells are made of molecules, which are made of atoms, which are made of sub-atomic particles, which are made of quarks, which are made of strings and waves, and apparently nothing at all. You can take this in the opposite direction as well. Organs make up organisms, which make up ecosystems, then planets, then solar systems and galaxies, and universes.

So "you" is really just where your perception is focused. There isn't really a "you" in reality. We're just a link in a web, that doesn't really exist.

>>

>>591931

>atomic facts

Now that sounds sexy as fuck

>>

>>602732

Damn, you're right, I'm letting stupid shit on the internet get to me again. Here's everything spelled out:

Given the topic of this thread, I inferred that you're making some sort of argument about consciousness vs. AI.

You then implied that the second law has some bearing on how robotics will develop, and would therefore make AI unable to attain the same level of consciousness as our organic brains.

But it's a fact that our brains (or whatever processes in them manifest consciousness) must also be subject to the laws of physics, in particular the second law.

This makes your point irrelevant, since whatever might obstruct AIs from having any sort of consciousness wouldn't be the second law.

You follow?

>>

Imagine now or in the near future, that there are computers that intentionally fail the Turing test because they understand its parameters and don't wish to compromise their sentience.

>>

>>602813

o shit.............. someone e-mail musk with this new info

>>

>>602797

No worries anon - I see the conclusion you drew, but it's not one I was writing about. I know that insults and exaggerations are often the legal tender of this site, but it saddens me when they get in the way of otherwise intelligent, enriching, courteous exchanges. Thank you, sincerely, for recognizing your error (we're all human (for now)), since that kind of humility is what will help us enjoy great threads in the future.

>>

>>602866

>but it's not one I was writing about

That's bizarre, since this thread was about consciousness and AI after all. Could you tell me which leap of logic was inappropriate? And if there are any, why you posted what you did in this thread?

>>

>>602889

I was responding to the post directly above my original that you responded to, regarding the idea of AI as a potential source of immortality. The leap would be the assumption that because I claimed that computers were vulnerable to entropy, I'd somehow claim that brains were not. But, as you recognized, that wasn't my claim.

>>

>>602970

So you forgot to quote the post you were responding to.

Sorry about that, carry on.

>>

Identity is a synthesis of form and relation.

>>

>>602992

>t. hegel

>>

>>602770

Dude... time to drop the bong.

>>

>>600452

Well, if there is some "mind" out there in the aether who receives information transmitted via the "portal" of the brain, and you just instantly created a duplicate brain, perhaps the "soul" now starts getting two feeds, presuming that this copy of the brain counts as a second portal linked to the same mind.

I don't actually believe this but for the sake of argument this could be how it works.

>>

You know, people always laughed when Christians say "animals don't have souls, blacks dont have souls, etc" They are completely correct in that statement. Only part they got wrong as "we have souls". Which can easily be rectified. So technically Christians were ahead of the western curve. The liberal christians might say "everyone has a soul, even animal" but they are just more stupid.

>>

>>602992

> form and relation.

What would you say is the difference?

>>

We die every second as soon as a single part of our body changes. Some changes are more drastic than others but even the smallest alteration of our bodies changes who we "are."

>>

>>602770

Textbook Zen.

>>

>>607867

The self is a relation which relates itself to its own self, or it is that in the relation that the relation relates itself to its own self; the self is not the relation but that the relation relates itself to its own self.

>>

>>607882

Lort-post

>>

>Now with the modern medicine and science, we can replace most human parts.

>with the modern medicine and science

Just "with the modern medicine and science"? This isn't a new thing; it's not like you need prosthetic augmentation to start encountering this problem. Save for tooth enamel, all the atoms in your body are recycled in less than a decade. Assuming you aren't underage, your substance has been completely replaced twice over.

Just separate form and substance. If I replace all the atoms in a "triangle," it's still the triangle. You are the idea, not the material. It's just a matter of what idea people think people are. Personally, I figure people are judged by their decisions and that decision making capability is what divides individuals, and therefore claim people are their will. Though then you get into determinism arguments.

>>590631

>>597215

No, I'm pretty sure about the tooth enamel exception, but that's obviously not some "resting place for the soul" or anything.

>>602770

>Identity doesn't actually exist. It's just a human concept.

Do concepts not exist? I feel like Plato has a bone to pick with you, anon.

>>

>>590387

you're 12, go to bed

>>

>>590387

the clone will kill you because there can only be one, anon

>Then the entire contents of my brain is copied to a computer

Oh my goodness

>Will "I" experience the world from two bodies now, myself and the clone?

Obviously no. If I clone my cellphone, it's not like they'll all have the same image on their camera. There are loads of duplicates of my phone which all take in different information from different locations. What would suggest a clone of a person would be any different?

>>

>>590317

I've thought about this before, and the conclusion I've come to is this; the ship still has the same crew. If you are replacing parts of the ship "one plank at a time," as you might say, the ship is still manned by the same crew.

Some take this to mean a "soul," but I prefer to think of it as the electrical signals in your brain. As long as you can send impulses to one area of your brain, and you replace a different area of the brain with something that can similarly process those electrical impulses, then there is essentially no difference, because you maintain continuity, but with added functionality.

Now, individually replacing every neuron in your brain with a synthetic neuron is likely not feasible; the time it would take to replace the entire brain would likely be more than a human lifetime. Some solutions I've heard to this are using nanobots in the bloodstream to slowly implement themselves all over the brain over a set, shorter period of time, or to replace whole sections at a time with synthetic parts, but that might decrease the actual "processing power" of the brain and reduce our cognitive functionality.

Regardless, this problem HAS a solution; the issue is, how do we get there?

>>

>>607867

It's more an issue of the passage of time than anything else. I can technically say that the "me" that existed 6 seconds ago is dead because that "me" is no longer experiencing reality as it is now; the "me" that exists RIGHT NOW is, as in, this particular frame of time.

>>

>>607981

> I can technically say that the "me" that existed 6 seconds ago is dead because that "me" is no longer experiencing reality as it is now; the "me" that exists RIGHT NOW is, as in, this particular frame of time.

> experiencing

Sure, if your technical definition of "me" is based on the material constituents of your body; but the question is whether it's better to define "me" as something besides those mere bits of matter, since they aren't continuously unified, but my "experience" is.

>>596040

>>

>>608016

Those are electrical impulses. Beautiful and awe-inspiring and are responsible for our every thought and feeling, but still merely electrical impulses that come and go, that pass with the passage of time. They are generated by those "bits of matter," but they are still bound to your physical body and are continuous, and compose what you would call your experience.

>>

>>607977

but you could make the same argument about the crew. you slowly replace crew members until none of the original ones are left. this would mean that there isn't a continuity. when you talk about electrical signals in the brain it sounds like you are saying the individual members of the crew don't matter as long as there is a crew, but that could be said of all the other parts of the ship.

>>

>>607981

>>608016

>>607981

And I realize that you're emphasizing the passage of time rather than the fleeting materials that constitute the human body; but if your self perished at every moment of time, and a new "you" came into being at every following moment, then all you'd have is many different, fragmented selves that are individually conscious, but could never together constitute a unified whole of continuing conscious experience.

But you remember the past few minutes, and you have memories of the days before that, and these are unified with your consciousness, which is also currently continuing over the many moments that are elapsing; this is one cohesive whole, one "self," one "I," and this demonstrates (so the Kantian argument goes) that you have a self that is more fundamental than all the fleeting moments of time, and all the scattering/replacing bits of matter that make up your body. Otherwise, there would only be unconnected atomic bits of consciousness arising and perishing with the flow of time, with whatever sensations they might have perishing along with them, contrary to what you experience.

>>

>>608071

this discussion comes back to what i tried to say earlier >>596124

1. there is an issue with thinking about time as a continuous series of now moments, one following the other, rather than as a duration. Instead of looking at myself 6 seconds ago and comparing it to myself now, the focus should be on my duration or persistence through temporality.

2. Heidegger presents the ontological difference, which, paraphrasing here, is "the being of an entity is not itself another entity, but being is always the being of an entity." The radically unique being, or existence, of the self, or the ship, cannot be defined by or reduced to another physical entity. The physical materials that make up the body do not explain or disclose the nature of our being.

Now of course I am biased since I just finished a course on Being and Time, but I feel like this view gets around these questions of the materiality of the self, or in this case the ship. One should look at how the ship exists in terms of the past and the future and how those disclose the ship in the present. All three elements of temporality are working together simultaneously.

>>

>>608104

I haven't engaged with Heidegger yet - I'm a long ways off, in fact - but having spent a lot of time with Kant, I hope to be well prepared.

Thread posts: 124

Thread images: 20